Reflecting on Reflection, Refraction, and Image Capture

How we indirectly perceive and experience the world through a series of filters, sensors, and more

By Jack Fox Keen

“Don’t tell me the moon is shining; show me the glint of light on broken glass.”

- Anton Chekhov

Have you ever thought about how looking directly at the Moon is the closest we can get to looking at the Sun? Because we can’t look directly at the Sun–but we can enjoy the Moon’s reflection of its light. We can never look directly at ourselves either–we are dependent on mirrors, chrome, or still water to see a reflection, but it’s always an indirect representation of who we are. And how can we trust what we see in the mirror? Imagine if we spent our whole lives looking at ourselves in fun house mirrors at the carnival. How would we be able to determine that this is not reality? Every reflection we’d have seen would have been distorted. In fact, we are already seeing this with Zoom dysmorphia–we spend more time looking at ourselves through a webcam than we do looking in a mirror, and this affects how we perceive ourselves.

Similarly, we cannot capture what we see directly either. When we take a picture on our phone, a whole series of processes must occur between the light reflecting off the scenery and the final output of a digital photo. As outlined in Digital Image Forensics, the two most important aspects of image capture are the lens and the sensor, but as the following diagram shows, these are certainly not the only aspects.

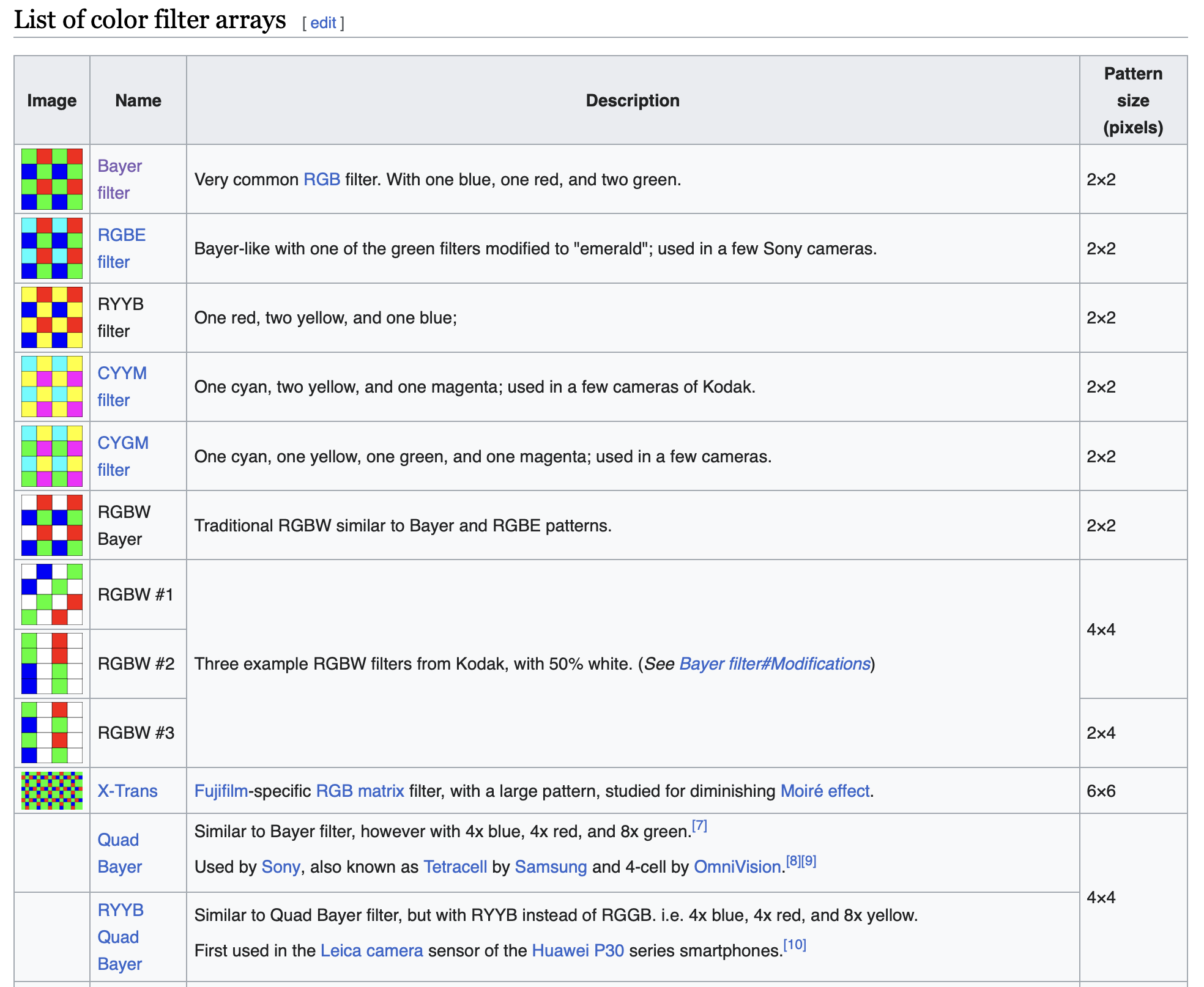

First, we point our lens at a scene and it captures the light reflected off of matter in that scene–a person, a tree, etc. From the lens, the light is passed through a Color Filter Array (CFA), because most light sensors cannot distinguish wavelengths of color on their own. The most common CFA, called the Bayer filter, mimics the human eye’s greater sensitivity to green light, so it has twice as many green filters as red or blue ones (convenient for the ProofMode and Guardian Project logos!).
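To make the Bayer pattern concrete, here is a minimal Python sketch. It assumes the common RGGB tile layout (other layouts exist), and the function names are purely illustrative rather than taken from any camera library. It shows how each pixel keeps only one color channel, and why green ends up with twice as many samples.

```python
# Assumption: an RGGB Bayer layout, where every 2x2 tile looks like
#   R G
#   G B
# Real sensors may use other arrangements; this is only an illustration.
BAYER_TILE = (("R", "G"), ("G", "B"))
CHANNEL_INDEX = {"R": 0, "G": 1, "B": 2}


def bayer_channel(row, col):
    """Return which color filter sits over the pixel at (row, col)."""
    return BAYER_TILE[row % 2][col % 2]


def mosaic(image):
    """Simulate the CFA: keep only the filtered channel of each RGB pixel.

    `image` is a list of rows, each row a list of (r, g, b) tuples.
    """
    return [
        [pixel[CHANNEL_INDEX[bayer_channel(r, c)]] for c, pixel in enumerate(line)]
        for r, line in enumerate(image)
    ]


def count_filters(height, width):
    """Count how many filters of each color cover a height x width sensor."""
    counts = {"R": 0, "G": 0, "B": 0}
    for r in range(height):
        for c in range(width):
            counts[bayer_channel(r, c)] += 1
    return counts


if __name__ == "__main__":
    print(count_filters(4, 4))
    tiny = [[(1, 2, 3), (4, 5, 6)], [(7, 8, 9), (10, 11, 12)]]
    print(mosaic(tiny))
```

On a 4x4 sensor this layout gives 8 green filters against 4 red and 4 blue, which is the two-to-one ratio described above.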

This right here is a unique component of the philosophy of perception. We have biological limits in the wavelengths we can see, which determine the architecture of the cameras we develop. We create tools based on our abilities and inabilities, and these tools shape our reality. This also plays into thoughts about Design Justice, where we explore how design can and should be used to uplift the most marginalized in our society, by creating infrastructures which are accessible for everyone. For an analogous example in physical infrastructure, we can see that by building ramps and doors wide enough for wheelchairs, everyone benefits and no one is hindered by these modifications.

It should be noted that the Bayer filter is just one of many possible color filter arrays.

The color subsampling performed by the CFA results in aliasing, which is the overlapping of frequency components. Such overlaps can lead to distortions or unwanted artifacts in the resulting image. Yet another filter, an optical anti-aliasing filter, must be added between the image sensor and the lens to reduce these overlaps.
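A small one-dimensional sketch can show what "overlapping frequency components" means in practice. It is only an analogy to what happens on a sensor, and the helper names are hypothetical. With just 4 samples, a cosine of 3 cycles produces exactly the same values as a cosine of 1 cycle, so the two can no longer be told apart. With 16 samples, they are clearly different.

```python
import math


def sample_cosine(cycles, num_samples):
    """Sample a cosine with `cycles` full periods at `num_samples` even steps."""
    return [
        math.cos(2 * math.pi * cycles * n / num_samples)
        for n in range(num_samples)
    ]


def same_samples(a, b, tol=1e-9):
    """True when two sample lists match within a small floating-point tolerance."""
    return len(a) == len(b) and all(abs(x - y) <= tol for x, y in zip(a, b))


if __name__ == "__main__":
    # Too few samples: the fast signal masquerades as the slow one (aliasing).
    print(same_samples(sample_cosine(3, 4), sample_cosine(1, 4)))
    # Enough samples: the two signals are distinguishable again.
    print(same_samples(sample_cosine(3, 16), sample_cosine(1, 16)))
```

An anti-aliasing filter takes the other route: rather than sampling more densely, it blurs away the fine detail before sampling, so there is nothing left to masquerade.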

Furthermore, each pixel of the sensor sits behind a single color filter, so the sensor records the raw intensity of only one of the three colors at that pixel, creating the need for an algorithm to estimate the other two color components. In a process called “demosaicing,” the missing information is filled in using a chosen method of multivariate interpolation. However, the interpolation methods vary as well! We can choose anything from simple nearest-neighbor interpolation to the more complex bicubic interpolation, among others.
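As a rough illustration of the simplest option, here is a nearest-neighbor style demosaicing sketch in Python. It assumes an RGGB Bayer layout and even image dimensions, and `demosaic_nearest` is a hypothetical name, not any camera's actual algorithm. Each pixel borrows its missing colors from the samples inside its own 2x2 tile.

```python
def demosaic_nearest(raw):
    """Estimate full (r, g, b) pixels from a raw RGGB mosaic.

    Assumptions of this sketch: `raw` is a list of rows of single
    intensities, laid out in 2x2 tiles of
        R G
        G B
    and both dimensions are even. Missing channels are copied from the
    nearby sample of that color within the same tile.
    """
    height, width = len(raw), len(raw[0])
    if height % 2 or width % 2:
        raise ValueError("this sketch assumes even image dimensions")

    out = [[None] * width for _ in range(height)]
    for r in range(height):
        for c in range(width):
            top, left = r - r % 2, c - c % 2   # corner of this pixel's tile
            red = raw[top][left]               # R sits at the tile's top-left
            blue = raw[top + 1][left + 1]      # B sits at the tile's bottom-right
            # Use the green sample on the same row of the tile.
            green_col = left + 1 if r % 2 == 0 else left
            green = raw[r][green_col]
            out[r][c] = (red, green, blue)
    return out


if __name__ == "__main__":
    raw_tile = [[10, 20], [30, 40]]
    for row in demosaic_nearest(raw_tile):
        print(row)
```

Notice that two of every three values in the output are copies, not measurements. A bicubic method would produce different estimates from the same raw data, which is exactly the kind of variability discussed here.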

Then there is post-processing and image compression, both of which take various forms as well. For image compression, it is common to use a Discrete Cosine Transform, yet there are variations of this transformation too, as shown in the following image. Finally, the transformed coefficients are quantized according to a quantization table, which also varies among cameras.
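The transform-then-quantize step can be sketched in a few lines of Python. This is a simplified one-dimensional version using the orthonormal DCT-II (JPEG-style compression works on two-dimensional 8x8 blocks), and the quantization tables below are made-up values chosen only to show that different tables yield different stored numbers.

```python
import math


def dct_1d(samples):
    """Orthonormal DCT-II of a list of samples."""
    n = len(samples)
    coeffs = []
    for k in range(n):
        scale = math.sqrt(1 / n) if k == 0 else math.sqrt(2 / n)
        total = sum(
            x * math.cos(math.pi * (i + 0.5) * k / n)
            for i, x in enumerate(samples)
        )
        coeffs.append(scale * total)
    return coeffs


def idct_1d(coeffs):
    """Inverse of dct_1d (an orthonormal DCT-III)."""
    n = len(coeffs)
    samples = []
    for i in range(n):
        total = 0.0
        for k, c in enumerate(coeffs):
            scale = math.sqrt(1 / n) if k == 0 else math.sqrt(2 / n)
            total += scale * c * math.cos(math.pi * (i + 0.5) * k / n)
        samples.append(total)
    return samples


def quantize(coeffs, table):
    """Divide each coefficient by its table entry and round (the lossy step)."""
    return [round(c / q) for c, q in zip(coeffs, table)]


def dequantize(levels, table):
    """Approximate the original coefficients from the stored levels."""
    return [level * q for level, q in zip(levels, table)]


if __name__ == "__main__":
    row = [52, 55, 61, 66, 70, 61, 64, 73]
    coarse_table = [16] * 8   # hypothetical table, not from any real camera
    fine_table = [4] * 8      # hypothetical table, not from any real camera
    for table in (coarse_table, fine_table):
        levels = quantize(dct_1d(row), table)
        restored = idct_1d(dequantize(levels, table))
        print(levels, [round(v) for v in restored])
```

The same row of pixels yields different stored levels under each table, and the restored values differ from the originals by an amount that depends on the table. That dependence is part of what lets forensic analysis connect an image to the device that produced it.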

It is clear that even an image captured on a phone is an abstraction of an abstraction, a reflection of a reflection. Our resulting image is the product of multiple sensors, filters, and algorithms. On top of that, there are so many variations of each of these to choose from that the number of possible combinations is staggering.

So what does all this mean for reality? How can we “prove” our perceptions? If the images we capture on our camera phones are an amalgamation of interpolations and estimations, how do we trust them? And this hasn’t even begun to scratch the surface of AI-enhanced cameras, each with its own underlying training data sets, rules, and algorithms. Coming full circle, we have seen the effects of AI artificially adding details to zoomed-in photos of the Moon, when the Samsung camera in question did not actually capture that level of detail through its sensors.

I don’t know if I have any answers for existentialism, or ideas on how to prove or disprove that we’re all living in a simulation. However, I take solace in knowing that the Moon reflects the Sun, and is able to provide light in the dark, despite not generating light itself. Likewise, the abstractions of a scene, the interpolation of pixels, can also provide information and guide us through the shadowy parts of reality. In fact, digital image forensics does just that, as each model of camera has a distinctive combination of these varying features. It can be overwhelming that there is so much variability–but without this variability, we wouldn’t have unique identities. By capturing these different combinations of information, ranging from sensor data captured by ProofMode, to comparing metadata in ProofCheck, we can provide a reasonable chain of custody for events. While the burden of “proof” does indeed remain a burden to bear, we can provide an accumulation of data points to lift the burden and uplift the evidence in a chain of custody.

The predecessor of ProofMode, CameraV, provided an option to uniquely fingerprint the camera sensor with five boring photos. Not only is the camera model unique, but the sensors themselves can be unique. This is a feature we can actually integrate into ProofMode to help establish identity and fingerprint the camera sensor. We may not be able to recreate the scenery, but we can shed light on the conduit of reality that created the resulting image. We do not claim to have the perfect solution to countering accusations of deepfakes, but we are utilizing all the tools at our disposal, embracing them for creativity and narrative, not just forgery.

“Hypotheses are nets: only he who casts will catch.”

- Novalis